I'm not sure this is the most appropriate group for this topic, but it may affect best practices for building large LV applications, and the NI R&D folks here may have a quick answer (or be able to point me elsewhere), so I'll give it a shot.

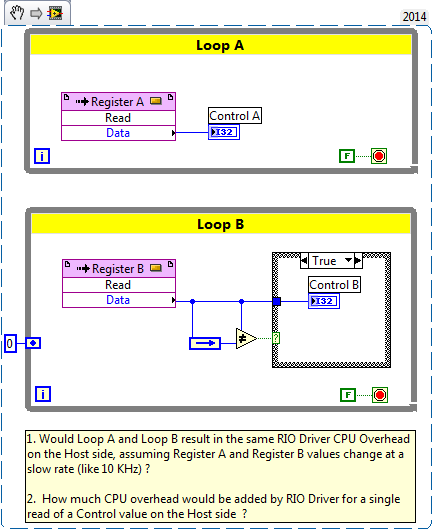

I need a better idea of the CPU overhead the RIO Driver adds when FPGA-side code writes values to Top Level VI Controls:

Would the CPU overhead be the same in both cases? Or should my FPGA-side code minimize the number of Control writes if I need to minimize CPU overhead on the Host side? I suspect the RIO Driver may use some CPU bandwidth even if my Host-side code never reads the control values at all.

There is a really helpful description of the DMA FIFO inner workings in the “NI LabVIEW High-Performance FPGA Developer’s Guide”. Unfortunately, it says little about how the RIO Driver implements Host Interface Controls. It only mentions that the propagation delay is on the microsecond scale and that polling data this way is faster than using FPGA Interrupts. I have also seen a ballpark propagation delay of 25 microseconds, though that figure may come from a different document.

Any insights from LabVIEW R&D?

Thanks,

Dmitry