Retrieve data from corrupt TDMS files?

04-01-2010 04:58 AM

Hello

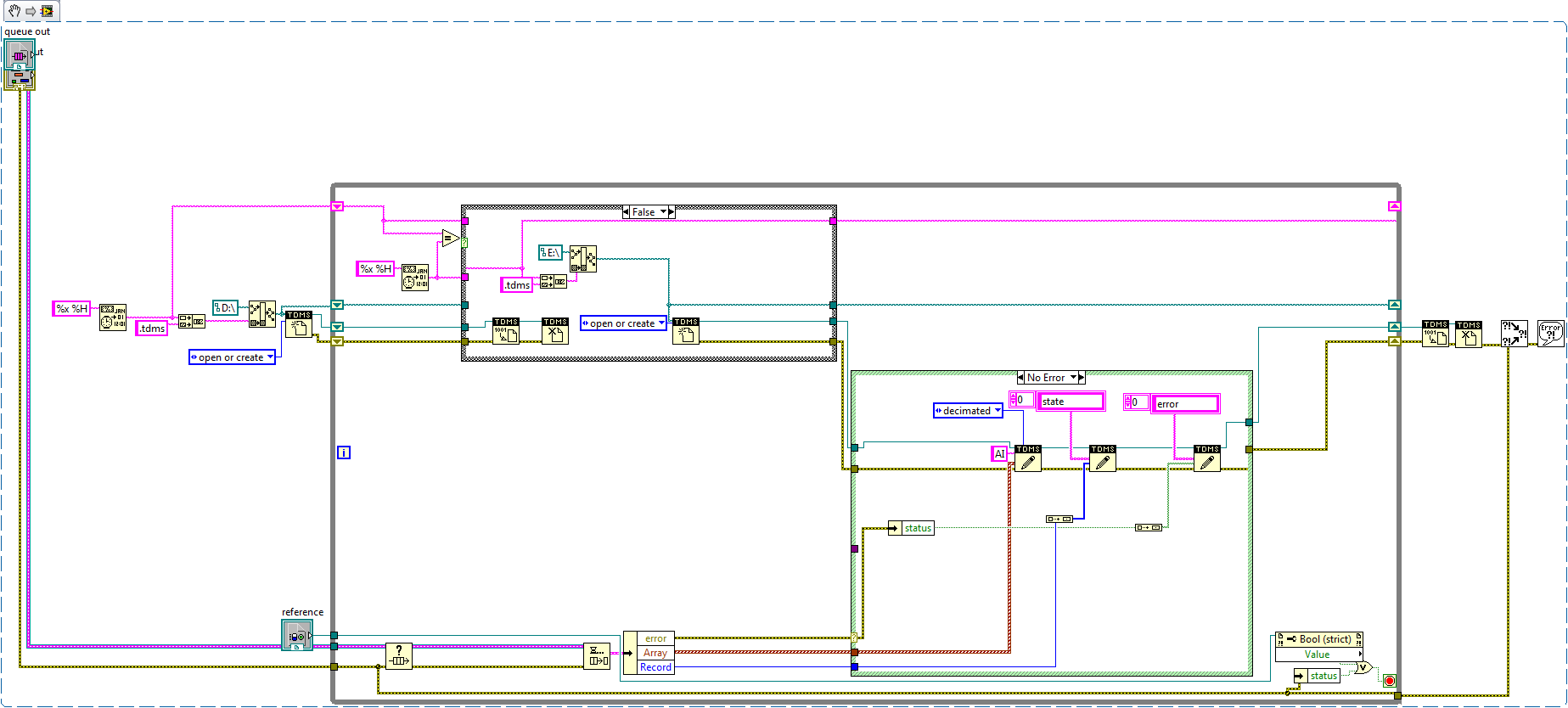

I have a problem with my TDMS files. I have stored data in TDMS for the last week, with a new file being created every hour to avoid data loss in case of a crash etc. I've used LabVIEW 2009, and the storage procedure is shown in the attached snippet.

The problem is that some of my files are corrupt. When I try to open them in DIAdem or LV, I get Error 2511 saying that the file could not be opened since it is corrupt. I have looked around on the forum and found that people with the same problem had mismatched versions of TDMS, but I use LV 2009 and DIAdem 11.1, so TDMS 2.0 should be supported. Also, some of the files are perfectly OK; only about one in three is corrupted.

The broken files are the same size as the ones that work, so I know that there is data inside them, and I am desperate to get that data out. Is there any way to do this?

Does anyone know what the software problem might be, and why some of the files are broken while others aren't?

Best regards,

Felix

04-01-2010 05:01 AM

A larger picture. The previous one turned out a bit small.

04-01-2010 11:27 AM

Hi Felix,

Why do you create the first TDMS file on your D: drive and then create all subsequent TDMS files on your E: drive? For those files that you can't load, please try deleting the associated *.tdms_index file and then try reloading that TDMS file. The *.tdms_index file will be recreated when you try to reload the data file. If the problem is in the index file and not the data file, that should quickly fix everything. If that doesn't work, please post one of the problem TDMS files or email it to me at brad.turpin@ni.com.

Let us know,

Brad Turpin

DIAdem Product Support Engineer

National Instruments

05-04-2011 09:34 AM

Hi Brad,

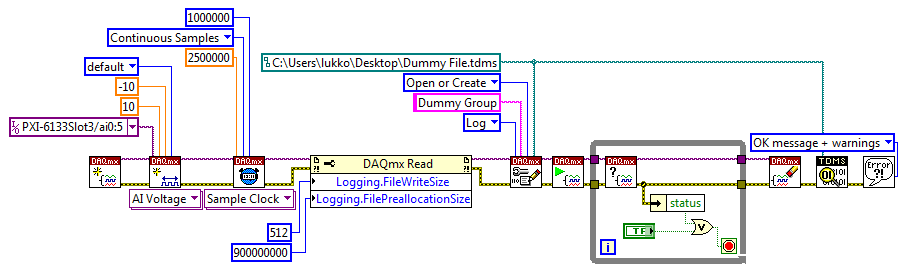

I do not want to start a new post. Maybe you can help me. TDMS files were saved by some of my colleagues using the following structure.

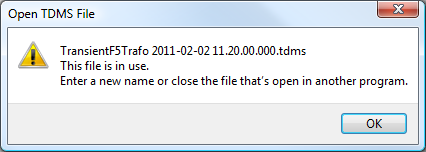

Sometimes the measurement process was interrupted. I am not really sure why, because I was not there at the time, but it was probably due to a loss of power. Now, when I try to open some of the TDMS files, I get the following message.

I am slightly confused. Do you have any idea how to read data from corrupted files? Just let me know if you would like more details.

Thanks for your help!

--

Łukasz

05-06-2011 08:29 AM

Brad,

I've been having a problem with the same Error-2511 Corrupted TDMS file. Your suggestion to delete the index file worked like a charm for me, but then the question becomes how do I troubleshoot the root cause? Every time I run a test that is of considerable length (~4 hours @ 0.1 Hz data log of 170 channels), I end up with a corrupted file.

Obviously, in terms of LV product application, my data sets are not all that large. I just don't know how to fix this as deleting every index file manually is not practical.
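
For readers in the same situation, the manual workaround of deleting the index file can be scripted. The following is a minimal sketch in Python, not an NI tool: the function name `remove_stale_indexes` and its `dry_run` flag are made up for illustration, and it assumes that each index file sits next to its data file under the same base name. It only automates the workaround and does not address the root cause.

```python
from pathlib import Path
from typing import List


def remove_stale_indexes(folder: str, dry_run: bool = True) -> List[Path]:
    """Find every *.tdms_index file that has a matching *.tdms file beside it.

    With dry_run=True (the default) nothing is deleted; the candidates are
    only returned so they can be reviewed first.
    """
    candidates = []
    for index_file in sorted(Path(folder).glob("*.tdms_index")):
        # "run1.tdms_index" -> "run1.tdms"
        data_file = index_file.with_suffix(".tdms")
        if not data_file.exists():
            # Leave orphaned index files alone; there is nothing to reload.
            continue
        if not dry_run:
            index_file.unlink()
        candidates.append(index_file)
    return candidates
```

Calling `remove_stale_indexes(folder)` first lists what would be removed; calling it again with `dry_run=False` deletes those files, after which the index is recreated the next time each data file is loaded, as described earlier in the thread.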

05-08-2011 08:48 PM

Hi guys,

I have some suggestions for the error code -2511 when using TDMS.

First of all, please download the latest released TDMS installer from the link below and install it on your machine. Before installing, you can also back up your tdms.dll in \program files\national instruments\shared\tdms.

http://zone.ni.com/devzone/cda/tut/p/id/9995

Secondly, I have a simple VI to recover a corrupted TDMS file, but it only works when only the last segment in the TDMS file is corrupted. I have attached my VI here.

If neither of the above works, please email me at yongqing.ye@ni.com with your corrupted file if convenient; I would like to investigate further.

Yongqing Ye

NI R&D
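
The attached VI is not reproduced in this thread, so the following is not its implementation. It is a minimal Python sketch of one way to handle the "only the last segment is corrupted" case, assuming the segment layout from NI's published description of the TDMS format: each segment starts with a 28-byte lead-in (the tag `TDSm`, a ToC bit mask, a version number, the next-segment offset and the raw-data offset), and a segment that was cut off while being written has a next-segment offset of all `0xFF` bytes. The function names are hypothetical. Only the lead-ins are read, so memory use stays small even for multi-gigabyte files.

```python
import struct
from typing import BinaryIO, List, Tuple

LEAD_IN_SIZE = 28                # assumed size of the segment lead-in
TOC_BIG_ENDIAN = 1 << 6          # assumed ToC flag for big-endian segments
INCOMPLETE = 0xFFFFFFFFFFFFFFFF  # assumed marker of an unfinished segment


def complete_segments(f: BinaryIO) -> List[Tuple[int, int]]:
    """Return (start, length) of each intact segment, stopping at the first bad one."""
    f.seek(0, 2)
    file_size = f.tell()
    segments = []
    pos = 0
    while pos + LEAD_IN_SIZE <= file_size:
        f.seek(pos)
        lead_in = f.read(LEAD_IN_SIZE)
        if lead_in[:4] != b"TDSm":
            break
        # The ToC mask itself is always stored little-endian.
        (toc,) = struct.unpack_from("<I", lead_in, 4)
        order = ">" if toc & TOC_BIG_ENDIAN else "<"
        _version, next_offset, _raw_offset = struct.unpack_from(order + "IQQ", lead_in, 8)
        length = LEAD_IN_SIZE + next_offset
        if next_offset == INCOMPLETE or pos + length > file_size:
            break  # segment was never finished or runs past the end of the file
        segments.append((pos, length))
        pos += length
    return segments


def copy_intact_part(src_path: str, dst_path: str, chunk_size: int = 1 << 20) -> int:
    """Copy only the intact leading segments to dst_path; return how many were kept."""
    with open(src_path, "rb") as src:
        segments = complete_segments(src)
        remaining = sum(length for _, length in segments)
        src.seek(0)
        with open(dst_path, "wb") as dst:
            while remaining > 0:
                chunk = src.read(min(chunk_size, remaining))
                if not chunk:
                    break
                dst.write(chunk)
                remaining -= len(chunk)
    return len(segments)
```

The copy drops the damaged tail, so whatever was in the unfinished segment is lost. The original file is left untouched, and the copy should be opened without the old *.tdms_index file so that a fresh index is built.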

05-11-2011 05:40 AM

Hi Yongqing,

Thank you for the fast response. Your VI works perfectly and recovers my corrupted files. I still have one problem with large files. During transient measurements I normally save events in a single file, which can reach 10 GB. I found that I cannot open corrupted files larger than about 1 GB because memory fills up.

Could you describe, or provide some examples of, how to split large TDMS files into smaller ones to avoid the out-of-memory error? Right now the "count (1)" input of the "Read from Binary File" function is set to -1. I am wondering whether it would be possible to read TDMS files with a specific offset and number of samples, as with the "TDMS Read" function.

Thanks!

--

Łukasz
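
The memory problem described here comes from reading the whole file in one call (count = -1). As a language-neutral illustration of the offset-and-count idea, here is a minimal Python sketch that reads a flat binary file of 64-bit floats one block at a time. It is an assumption-laden stand-in: a real TDMS file interleaves metadata with raw data, so this does not parse TDMS, and the names `read_values` and `iter_chunks` are hypothetical.

```python
import struct
from typing import Iterator, List


def read_values(path: str, offset: int, count: int, fmt: str = "<d") -> List[float]:
    """Read up to `count` values starting at value index `offset`."""
    size = struct.calcsize(fmt)
    with open(path, "rb") as f:
        f.seek(offset * size)
        raw = f.read(count * size)
    # Ignore a trailing partial value, e.g. from an interrupted write.
    usable = len(raw) - len(raw) % size
    return [v[0] for v in struct.iter_unpack(fmt, raw[:usable])]


def iter_chunks(path: str, chunk_values: int = 1_000_000, fmt: str = "<d") -> Iterator[List[float]]:
    """Yield the file's values block by block so it never sits in memory as a whole."""
    offset = 0
    while True:
        values = read_values(path, offset, chunk_values, fmt)
        if not values:
            break
        yield values
        offset += len(values)
```

Peak memory is then bounded by `chunk_values` rather than by the file size, which is the same effect the offset and count inputs give on the LabVIEW side.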

05-11-2011 12:53 PM

Hi Lukasz,

With a (binary) data file that large, I'd recommend using the "Register Data" context menu in DIAdem NAVIGATOR, rather than dragging the whole file into DIAdem.

The Storage VIs do support reading subsets from channels starting at a particular array index offset and continuing for a certain number of values much smaller than the full channel length. DIAdem supports this channel subset loading as well as loading with automatic data reduction.

Brad Turpin

DIAdem Product Support Engineer

National Instruments

05-12-2011 02:55 AM

Hi Brad,

I have to admit that I do not use DIAdem. I process all my TDMS files with my own software. I know this is the DIAdem board, but a corrupted TDMS file is a corrupted TDMS file wherever it is opened, which is why I decided not to start a new topic on the same subject.

I would be grateful if someone could advise me how to split large corrupted TDMS files without using the TDMS API, so that I can then retrieve the data using Yongqing's VI. I have to say that I do not fully understand the code Yongqing provided, because I do not know the structure of binary TDMS files.

Thanks!

--

Łukasz

05-15-2011 09:20 PM

Hi Łukasz,

The TDMS Read node in LabVIEW has terminals similar to the ones you describe for Binary File Read. TDMS Read has "offset" and "count" terminals; by wiring values to them, you can read only part of the data from a large file.

Also, TDMS currently cannot split data into smaller files automatically, so I'm afraid you have to program this yourself. For example, you can keep track of the number of data values you stream to the file, or of the file size directly, and start writing to a new TDMS file once that number or size exceeds a threshold. Of course, that means you may need to read from multiple files when reading the data back.

Thanks,

Yongqing Ye

NI R&D
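
The bookkeeping suggested above can be sketched as follows. This is a minimal Python illustration of the rollover logic only, under stated assumptions: it writes plain binary files rather than TDMS, the class name `RollingWriter`, the file naming scheme and the `max_bytes` default are hypothetical, and it starts a new file just before a block would push the current one past the limit.

```python
import os
from typing import BinaryIO, List, Optional


class RollingWriter:
    """Write blocks to numbered files, starting a new file near a size limit."""

    def __init__(self, folder: str, prefix: str = "log", max_bytes: int = 512 * 1024 * 1024):
        self.folder = folder
        self.prefix = prefix
        self.max_bytes = max_bytes
        self.paths: List[str] = []   # files created so far, in order
        self._file: Optional[BinaryIO] = None
        self._written = 0            # bytes written to the current file

    def _open_next(self) -> None:
        if self._file is not None:
            self._file.close()
        name = f"{self.prefix}_{len(self.paths) + 1:04d}.bin"
        path = os.path.join(self.folder, name)
        self._file = open(path, "wb")
        self._written = 0
        self.paths.append(path)

    def write(self, block: bytes) -> None:
        # Roll over before a block would exceed the limit; a single block
        # larger than the limit still gets a file of its own.
        too_big = self._written > 0 and self._written + len(block) > self.max_bytes
        if self._file is None or too_big:
            self._open_next()
        self._file.write(block)
        self._written += len(block)

    def close(self) -> None:
        if self._file is not None:
            self._file.close()
            self._file = None
```

Keeping the list of created files in `paths` gives the reading side the order in which to open them, which covers the "read from multiple files" caveat mentioned in the post.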