Measure of time elapsed

Solved! 07-13-2009 03:00 PM

I've just started using LabVIEW and I'm working on a project in which a sound file needs to be played once a microphone picks up a sound with a frequency above a certain level (right now I have a sound board that lets me trigger these cues manually). I'm sure I'll have many more questions in the future, but for now I'm just wondering how I can measure how much time it takes for LabVIEW to read the frequency and then play the file.

07-13-2009 04:35 PM

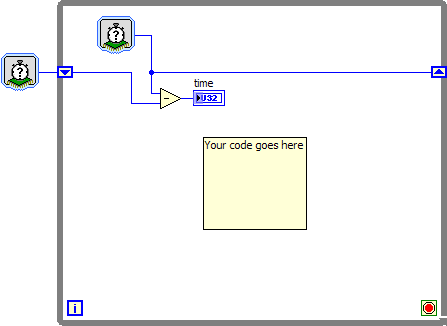

You can get the start time and the end time by using the Tick Count (ms) function or the Get Date/Time In Seconds function. Subtracting the start time from the end time gives the elapsed time.
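LabVIEW block diagrams are graphical, so the start/end pattern can only be approximated in text. Below is a minimal Python sketch of the same idea, not the VI from the screenshot. The names `tick_count_ms`, `elapsed_ms`, and `measure` are hypothetical, and the 32-bit masking is there only to imitate a millisecond counter that rolls over, as an unsigned 32-bit tick value does.

```python
import time

MASK_32 = 0xFFFFFFFF  # imitate an unsigned 32-bit millisecond counter


def tick_count_ms() -> int:
    """Return a monotonic millisecond count wrapped to 32 bits (hypothetical helper)."""
    return (time.monotonic_ns() // 1_000_000) & MASK_32


def elapsed_ms(start: int, end: int) -> int:
    """Difference of two 32-bit tick values; stays correct across one rollover."""
    return (end - start) & MASK_32


def measure(action) -> int:
    """Read the counter, run the action, read the counter again, return the difference."""
    start = tick_count_ms()
    action()
    end = tick_count_ms()
    return elapsed_ms(start, end)


if __name__ == "__main__":
    # Stand-in for "read the frequency, then play the file".
    print(measure(lambda: time.sleep(0.05)), "ms")
```

The point to carry over is the ordering: the first reading must happen before the measured work starts and the second only after it finishes. In a block diagram that ordering usually has to be enforced with data flow, for example a sequence structure.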

Hope it helps!

07-14-2009 01:45 PM

07-14-2009 02:11 PM

07-14-2009 02:35 PM - edited 07-14-2009 02:40 PM

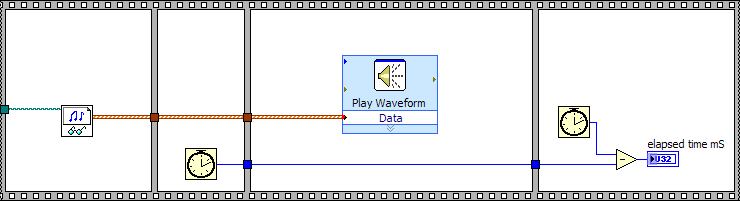

Sorry I'm being that annoying new guy in the forum who just posts questions as he thinks of them and doesn't bother doing work. I figured out the issue and everything seems to be working. I'm consistently getting readings of around 330 ms. Does that seem correct? Seemed a bit slow to me.

Also, out of curiosity, what is that item you're putting the final value into at the end of the process? I've just been using a numeric indicator.

07-14-2009 02:38 PM - edited 07-14-2009 02:42 PM

No worries 🙂

Check the example here for playing a wave file.

Also, see the attached files.

07-14-2009 02:45 PM

08-14-2009 07:55 AM

08-14-2009 01:29 PM

BenYL,

One quick way to get a time output would be to use a shift register.
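One reading of this suggestion is that the shift register carries the previous timestamp from one loop iteration to the next, so each iteration can report the time since the last one. The Python sketch below is a rough textual analogue under that assumption, not the poster's actual VI. The `previous` variable plays the role of the shift register, and `timed_iterations` and `step` are hypothetical names.

```python
import time


def timed_iterations(step, count):
    """Run step() count times and return the duration of each iteration in ms."""
    previous = time.perf_counter()  # initial value fed into the "shift register"
    durations = []
    for _ in range(count):
        step()
        now = time.perf_counter()
        durations.append((now - previous) * 1000.0)
        previous = now  # pass the current timestamp on to the next iteration
    return durations


if __name__ == "__main__":
    for ms in timed_iterations(lambda: time.sleep(0.01), 3):
        print(f"{ms:.1f} ms")
```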

08-14-2009 03:20 PM

"Should be" isn't "Is" -Jay