What is the purpose/advantage of Type Cast

Solved! 06-14-2023 02:49 AM

Hi Sir,

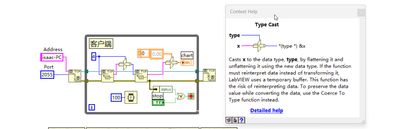

I have a question about Type Cast: does it change the data type automatically to make the data compatible?

In the case below, could we just use the string-to-number function instead of Type Cast?

What is the difference?

Thanks.

06-14-2023 02:55 AM

It tells LabVIEW to interpret the underlying binary data as a different data type.

For example, a string is an array of characters, where each character is represented by a U8 integer.

Soliton Technologies

06-14-2023 03:00 AM

But if we just use the "Decimal String To Number" function, is there any risk or compatibility issue?

Thanks.

06-14-2023 03:00 AM - edited 06-14-2023 03:02 AM

@Brzhou wrote:

Hi Sir,

I have a question about Type Cast: does it change the data type automatically to make the data compatible?

In the case below, could we just use the string-to-number function instead of Type Cast?

No, you can't! They are completely different operations!

String to Number looks at the string and parses a decimal number written as text into an actual numeric value.

Type Cast simply takes the 4 bytes in the string and interprets them directly as a 32-bit integer (specifically, in big-endian byte order).

Just connect the string you get from the first TCP Read to a string indicator and look at it. You will see gibberish. That is because the bytes in that string usually do not form valid ASCII codes.
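For readers who think more easily in text code, here is a rough Python analogue of the two operations. It is only a sketch: the 4-byte `header` value is a made-up example of what a TCP read might return, and `struct.unpack` merely stands in for Type Cast.

```python
import struct

# Hypothetical 4-byte length header, as it might arrive from a TCP read.
header = b"\x00\x00\x00\x10"

# Type Cast analogue: reinterpret the 4 raw bytes as a big-endian signed 32-bit integer.
(length_from_cast,) = struct.unpack(">i", header)
print(length_from_cast)  # 16

# String-to-number analogue: parse human-readable decimal digits.
length_from_text = int("16")
print(length_from_text)  # 16

# The raw header contains no decimal digits, so parsing it as text cannot work.
try:
    int(header.decode("latin-1"))
    parsed_ok = True
except ValueError:
    parsed_ok = False
print(parsed_ok)  # False
```

Both paths can produce the number 16, but they start from entirely different bytes: four binary bytes in one case, the two ASCII characters "1" and "6" in the other.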

06-14-2023 03:34 AM - edited 06-14-2023 03:46 AM

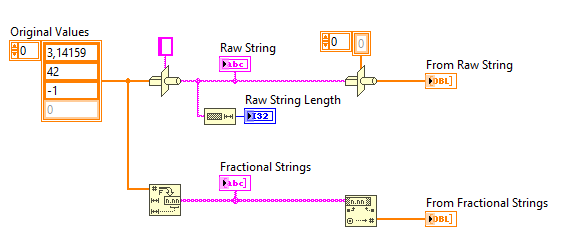

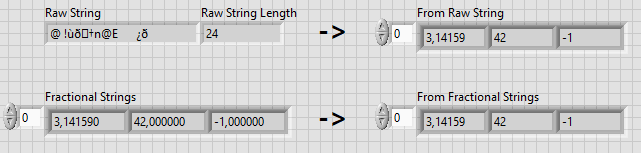

Try for yourself and you will see the difference:

Here, for example, Type Cast flattens the values to 24 bytes, because I have 3 values and each one is an 8-byte floating-point number (double). Type Cast is often used for flattening/unflattening communication frames because the result is compact and efficient.

On the other hand, the Number to String function turns the numbers into human-readable ASCII representations. This is often used for displaying values in user interfaces or producing human-readable configuration files.
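The same contrast can be sketched in Python, assuming three arbitrary example values (the numbers in the screenshots are not reproduced here). `struct.pack` plays the role of Type Cast and string formatting plays the role of Number to String.

```python
import struct

# Three example doubles (hypothetical values chosen for illustration).
values = [1.5, 2.0, -3.25]

# Binary flattening: 3 values x 8 bytes each, big-endian, no separators.
flattened = struct.pack(">3d", *values)
print(len(flattened))  # 24

# Human-readable text: length depends on the formatting, not on the data type.
text = ", ".join(f"{v:.2f}" for v in values)
print(text)  # 1.50, 2.00, -3.25

# Unflattening the binary form recovers the original values.
restored = list(struct.unpack(">3d", flattened))
print(restored)  # [1.5, 2.0, -3.25]
```

The binary form always costs 8 bytes per double, while the text form is readable but its size and precision depend on the chosen format.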

Regards,

Raphaël.

06-14-2023 04:09 AM

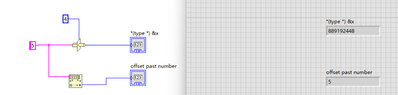

Yes, I just tried it. I am not sure why Type Cast converts the string "5" to 889192448. Thanks.

06-14-2023 04:51 AM

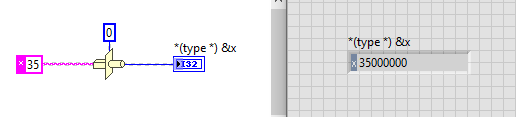

Because Type Cast reinterprets the raw bytes.

If you change the Display Style of the string constant to "Hex Display" and the radix of the numeric indicator to "Hex", you will see this:

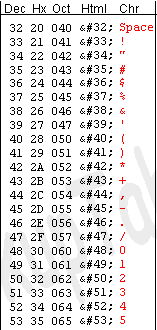

Your string "5" is actually stored in memory as a single byte of value 0x35 (expressed here in hexadecimal). Why 0x35? Because this is the value associated with the character "5" in the ASCII table.

So the value 0x35 is copied directly into the raw representation of the I32. Since an I32 has 4 bytes, LabVIEW pads with 3 zero bytes to make the final value: 0x35, 0x00, 0x00, 0x00. Read as a big-endian integer, that is 0x35000000, or 889192448 in decimal.
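The arithmetic can be checked with a few lines of Python. This is just an illustration of the byte layout described above, not a model of LabVIEW internals; the padding step is written out by hand.

```python
# The character "5" is one byte with ASCII code 0x35.
raw = "5".encode("ascii")
print(hex(raw[0]))  # 0x35

# Pad on the right with zero bytes to reach the 4 bytes of an I32.
padded = raw.ljust(4, b"\x00")
print(padded.hex())  # 35000000

# Interpret the 4 bytes as a big-endian signed 32-bit integer.
value = int.from_bytes(padded, byteorder="big", signed=True)
print(value)  # 889192448
```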

06-14-2023 06:38 AM

Thanks.

I see, my result now looks the same as yours.

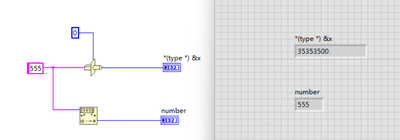

So in this case, Type Cast takes the ASCII code of each character in the string and pads the right side with zero bytes to match the length, up to 4 bytes. Thanks.

06-14-2023 06:49 AM - edited 06-14-2023 06:49 AM

Hi Brzhou,

@Brzhou wrote:

So in this case, Type Cast takes the ASCII code of each character in the string and pads the right side with zero bytes to match the length, up to 4 bytes. Thanks.

A TypeCast does NOT change the underlying data/bytes (the memory content); it just interprets those bytes differently. The 4 bytes of a SGL value can also be interpreted as the 4 bytes of a U32 or I32 value, but all three interpretations give different values.

Any other conversion function (like ScanFromString, Convert FromFractionalString, or ToI32) keeps the value of the item (as far as the target type allows) and changes the underlying data in memory to represent it. A SGL value of "1.0" consists of the 4 bytes 0x3F 0x80 0x00 0x00, while the I32 value of "1" consists of 0x00 0x00 0x00 0x01.
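As a rough Python illustration of that distinction (using `struct` as a stand-in for TypeCast and `int()` as a stand-in for a value conversion such as ToI32):

```python
import struct

# The 4 bytes of a single-precision 1.0, big-endian.
sgl_bytes = struct.pack(">f", 1.0)
print(sgl_bytes.hex())  # 3f800000

# TypeCast analogue: same bytes, read as an I32. The value changes.
(as_i32,) = struct.unpack(">i", sgl_bytes)
print(as_i32)  # 1065353216

# Conversion analogue: same value, stored as an I32. The bytes change.
converted = int(1.0)
print(struct.pack(">i", converted).hex())  # 00000001
```

Reinterpreting keeps the bytes and changes the value; converting keeps the value and changes the bytes.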