1. INTRODUCTION

The system provides an intuitive way to control a locomotion device, such as a robot, with virtually no physical movement at all.

Such a setup would not only help patients paralyzed below the neck to control hardware such as electric wheelchairs or robotic arms, but would also let any able-bodied person control systems by thought alone, without lifting a finger. In the long run, such a system could be coupled with existing manual control systems to make them more efficient and safe. Neurofeedback is given to the user as a visual or haptic stimulus so that they can regulate their brainwave output more easily, which in turn makes the control system more efficient.

Summary of Contributions:

- Detecting voluntary multiple blinks in the raw EEG signal and separating them from natural blinking.

- Mapping attention levels, deduced from the raw EEG through simple signal processing, to the control output magnitude.

- Combining blink and attention magnitude data with visual neurofeedback into an intuitive final control system.

2. BCI control system

The control layout employed in this paper consists of four main parts: the BCI, the signal processing system, the control output system and the visual neurofeedback system.

The BCI used here is the NeuroSky MindWave brainwave headset. It acquires raw EEG data from the scalp noninvasively and transmits it via Bluetooth to the processing unit. The device consists of a headset, an ear clip, and a sensor arm. The headset's reference and ground electrodes are on the ear clip, and the EEG electrode is on the sensor arm, resting on the forehead above the eye.

What sets this BCI apart from others on the market is that its electrodes are dry sensors that need no conductive gel on the skin. This was the main criterion for selecting it over other equipment.

The single sensor at the FP1 site (above the left eyebrow) provides a high degree of freedom: it can measure multiple mental states simultaneously, and its proximity to the eye enables it to detect the electromyography (EMG) signals created by eye blinks.

This gives us several variables that the processing unit can combine into a control algorithm, from which an efficient control system can be derived.

2.1 Communication of BCI

The NeuroSky MindWave BCI sends brainwave and debugging information wirelessly over Bluetooth. The device creates a virtual communications port (COM port) and sends data serially using the serial-over-Bluetooth protocol.

The ThinkGear Communications Driver (TGCD) is a device driver with a simple API that allows communication between an application on a computer and a ThinkGear chip (the ASIC embedded inside the MindWave headset). It is available as a .dll. Using the documentation that NeuroSky provides along with the driver, it is possible to create standalone applications for computers or mobile platforms.
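The VI in this report talks to the headset through the TGCD driver, so it never handles the serial bytes itself. For readers who want to see what travels over the COM port, the following is a minimal Python sketch of a packet parser. It assumes the ThinkGear serial stream layout as commonly documented by NeuroSky (two `0xAA` sync bytes, a payload length, the payload, and an inverted 8-bit checksum, with code `0x80` carrying a 16-bit signed raw sample). Treat those byte values as assumptions to verify against NeuroSky's own documentation; the function names are hypothetical.

```python
SYNC = 0xAA          # assumed: two of these start every packet
MAX_PLENGTH = 169    # assumed: larger length bytes are invalid
EXCODE = 0x55        # assumed: extended-code prefix, skipped in this sketch
RAW_WAVE = 0x80      # assumed: code for a 16-bit signed raw EEG sample


def checksum(payload):
    """Inverted low byte of the sum of all payload bytes."""
    return (~sum(payload)) & 0xFF


def extract_payloads(stream):
    """Return the payloads of all packets in `stream` whose checksum matches."""
    payloads = []
    i = 0
    while i + 3 < len(stream):
        if stream[i] != SYNC or stream[i + 1] != SYNC:
            i += 1
            continue
        plength = stream[i + 2]
        if plength > MAX_PLENGTH:
            i += 1
            continue
        end = i + 3 + plength          # index of the checksum byte
        if end >= len(stream):
            break                      # incomplete packet at end of buffer
        payload = stream[i + 3:end]
        if checksum(payload) == stream[end]:
            payloads.append(bytes(payload))
            i = end + 1
        else:
            i += 1                     # resynchronise one byte further on
    return payloads


def parse_rows(payload):
    """Split a payload into (code, value_bytes) rows."""
    rows = []
    i = 0
    while i < len(payload):
        if payload[i] == EXCODE:       # ignore extended-code prefixes
            i += 1
            continue
        code = payload[i]
        i += 1
        if code >= 0x80:               # multi-byte row: next byte is the length
            if i >= len(payload):
                break
            length = payload[i]
            i += 1
        else:                          # single-byte row
            length = 1
        rows.append((code, bytes(payload[i:i + length])))
        i += length
    return rows


def raw_samples(stream):
    """Decode every raw EEG sample found in a byte stream."""
    samples = []
    for payload in extract_payloads(stream):
        for code, value in parse_rows(payload):
            if code == RAW_WAVE and len(value) == 2:
                samples.append(int.from_bytes(value, "big", signed=True))
    return samples
```

In practice the bytes would come from the virtual COM port; here `raw_samples` takes a plain `bytes` object so that the parsing logic can be tried without a headset.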

2.2 Hardware-Application Interface and Debugging

The LabVIEW graphical programming environment provides a platform for easy development of complex features with error handling, as well as the ability to build an intuitive and interactive user interface.

LabVIEW communicates with the BCI through the TGCD driver, which lets it receive signal strength data as well as error information from the headset and display them on the computer screen.

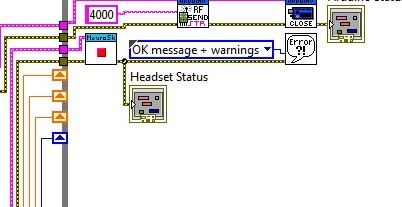

Fig 2.1 shows simple error handling VIs that stop the program and output error information to the screen if an error occurs before or during execution.

This allows easy and fast debugging and a user-friendly interface.

Fig2.1 Error Handling in the VI

3. Processing Raw EEG

LabVIEW is a graphical programming environment. Programs in this environment are called Virtual Instruments (VIs) and consist of a Block Diagram (BD) and a Front Panel (FP). The BD provides a graphical code development environment, whereas the FP allows the user to interact with the VI. LabVIEW provides an efficient and easy-to-use environment for code development, especially when the user needs to interact with the program and visualize the results. Unlike text-based programming languages such as C, which follow a control flow execution model, LabVIEW follows a dataflow execution model.

After an extensive and exceedingly informative session with Dr. Praveen Kumar of Tranquil Minds Psychiatric clinic of SIMHANS (Samhita Institute of Mental Health and Neuro Sciences Pvt. Ltd.) in Hyderabad, we came to understand that scalp EEG picked up from sensors placed on the forehead is in fact a summation of brainwaves of different frequencies and artifacts such as muscle activity, eye blinks, pulse signals and line noise. Extracting the useful components from the raw EEG signal and transforming them into a control output is done by processing in LabVIEW. The FP also serves as a tool for providing neurofeedback to the user.

3.1 Blink artifact segregation and processing

As mentioned above, the raw EEG input also includes the EOG, which appears as waves of larger amplitude caused by muscle movements in the ocular region. Since acquisition is done in real time, thresholding the amplitudes of the eye blinks and measuring the time intervals between consecutive blinks proved effective for counting two and three consecutive blinks while rejecting normal eye blinks. The following part of the block diagram performs the analysis of the raw data, the thresholding, and the output of the blink count to the visual feedback, which is then used to control the device.

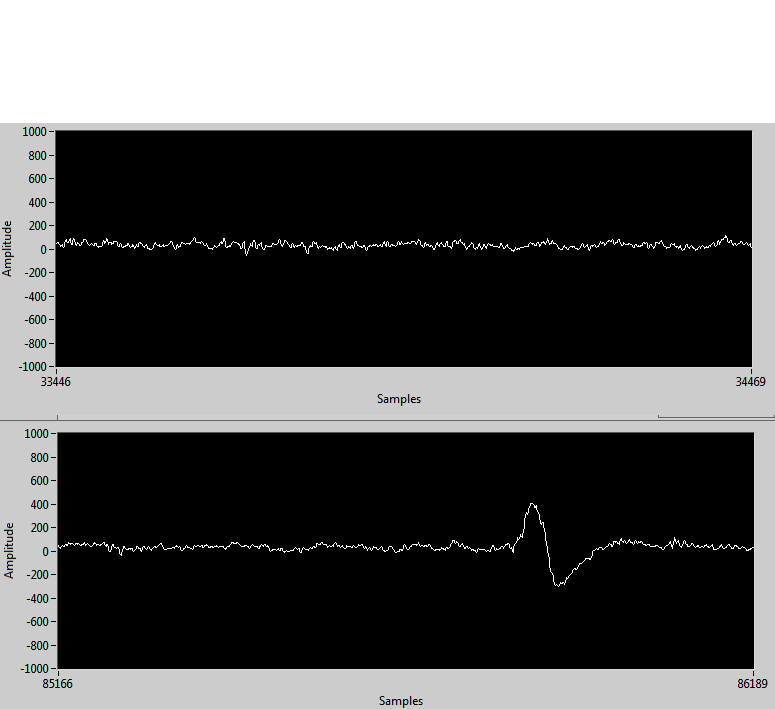

Blinking causes a spike in the EEG of appreciable magnitude compared with the base signal without the blink artifact. This lets us detect eye blinks by thresholding the EEG signal.

The time difference between consecutive eye blinks can also be found with simple programming constructs: timed loops, and shift registers to pass data between loop iterations in LabVIEW. A clear momentary spike can be seen in fig 3.1 where a blink occurred, as opposed to a clean EEG waveform when no physical movement occurs.

Fig3.1 blink occurring in the second waveform chart.

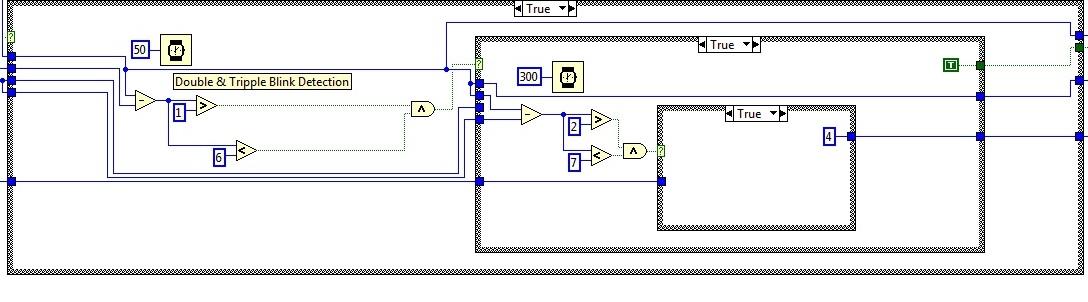

When a single blink with a magnitude above the set threshold occurs, a counter starts counting the milliseconds until another blink occurs. If the second blink occurs within a certain time limit, the pair is counted as a double blink. Triple blinks are detected in the same way.

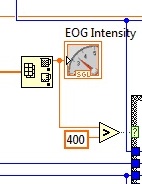

Experimentally, voluntary blinking was found to produce a deflection of 400 µV and above, so this value is used as the threshold for blink detection.

Fig3.2 Thresholding blink intensity

In effect, only forced voluntary blinks are detected and natural blinks are ignored. Fig 3.3 shows the blink detection code for double and triple blinks.

Fig3.3 Double and triple blink detection VI
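The thresholding and timing logic described in this section can be sketched in text form as follows. This is only an illustration in Python, not the VI itself: the 400 µV threshold comes from the report, while the 512 Hz sampling rate, the 500 ms maximum gap between blinks and all function names are assumptions made for the example.

```python
def blink_onsets_ms(samples_uv, fs, threshold_uv=400.0):
    """Return the times (ms) at which the signal first rises to the threshold.

    Only the rising edge is recorded, so one blink spanning many samples
    counts once. Smaller (natural) blinks never reach the threshold.
    """
    onsets = []
    above = False
    for i, value in enumerate(samples_uv):
        if value >= threshold_uv:
            if not above:
                onsets.append(1000.0 * i / fs)
            above = True
        else:
            above = False
    return onsets


def group_blinks(onsets_ms, max_gap_ms=500.0):
    """Group blinks whose spacing is at most max_gap_ms; return the group sizes.

    max_gap_ms is a hypothetical value standing in for the report's
    unspecified time limit.
    """
    groups = []
    last = None
    for t in onsets_ms:
        if last is not None and t - last <= max_gap_ms:
            groups[-1] += 1
        else:
            groups.append(1)
        last = t
    return groups


def classify(group_size):
    """Name a blink group the way the control system uses it."""
    return {2: "double", 3: "triple"}.get(group_size, "ignored")
```

A group of size 2 would then be treated as a double blink and a group of size 3 as a triple blink; isolated blinks fall through as "ignored".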

3.2 Attention Level Separation and Mapping

As mentioned above, raw EEG data is essentially a summation of various brainwave oscillations and artifacts. Some oscillations of notable importance are as follows:

1. Alpha waves: frequencies ranging from 8-13 Hz, corresponding to meditation levels in the brain activity.

2. Beta waves: frequencies ranging from 12-30 Hz, corresponding to normal waking consciousness.

3. Theta waves: frequencies ranging from 4-7 Hz.

4. Delta waves: frequencies less than 3 Hz.

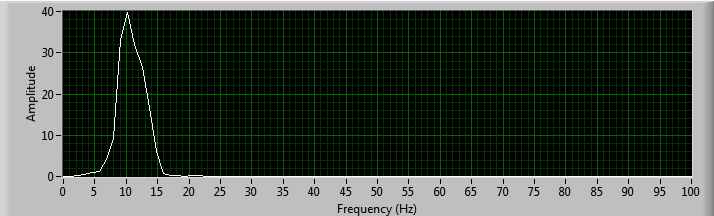

The beta wave in particular can be attributed to the “concentration” part of brain activity, and it is used here to control the output magnitude. The beta wave magnitude is deduced by first building a waveform 450 samples long, inserting the samples sent by the BCI point by point into an array. The waveform is then passed through an elliptic bandpass filter, and the power spectrum is calculated by applying an FFT to the signal, as shown in fig 3.4. Meditation, which corresponds to alpha wave intensity, can be deduced in the same way. The power spectrum for the alpha wave is shown in fig 3.5.

Fig3.4 Beta wave intensity extraction

Fig3.5 power spectrum output for Alpha wave(meditation)
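As a rough text-based counterpart to the band intensity extraction, the sketch below computes the power in a frequency band of a 450-sample window. It is deliberately simplified: instead of the elliptic bandpass filter followed by an FFT used in the VI, it evaluates a plain discrete Fourier transform and sums only the bins inside the band. The 512 Hz sampling rate and the function name are assumptions, not values taken from the report.

```python
import cmath
import math

FS_HZ = 512      # assumed raw sampling rate; not stated in the report
WINDOW = 450     # window length used in the report


def band_power(samples, fs, f_lo, f_hi):
    """Sum of squared DFT magnitudes for bins with f_lo <= frequency <= f_hi."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]      # remove the DC offset
    power = 0.0
    for k in range(1, n // 2 + 1):
        freq = k * fs / n
        if f_lo <= freq <= f_hi:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                        for i, x in enumerate(centered))
            power += (abs(coeff) / n) ** 2
    return power


# Example: a synthetic 20 Hz tone should show up in the beta band (12-30 Hz)
# far more strongly than in the alpha band (8-13 Hz).
tone = [math.sin(2 * math.pi * 20 * i / FS_HZ) for i in range(WINDOW)]
beta = band_power(tone, FS_HZ, 12, 30)
alpha = band_power(tone, FS_HZ, 8, 13)
```

Evaluating only the bins of interest keeps the example free of external libraries; with NumPy or LabVIEW's own FFT blocks the same quantity would normally be read off a full spectrum.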

The power spectrum is put on a 0 to 100 numeric scale. This value is then converted to a 0 to 255 scale (8-bit resolution), since the control output requires an 8-bit magnitude. The conversion is done by equation 3.1.

$$(x - \mathrm{in\_min}) \cdot \frac{\mathrm{out\_max} - \mathrm{out\_min}}{\mathrm{in\_max} - \mathrm{in\_min}} + \mathrm{out\_min} \tag{3.1}$$

where x is the value to be scaled, in_min and out_min are the minimum input and output values respectively, and in_max and out_max are the maximum input and output values respectively.

On the 0-100 scale, a beta level reading of 40-60 is considered normal. Control output should be sent to the actuators only for elevated levels of attention or concentration, so the attention level is compared with a numeric threshold, and the final control output is sent only when the level exceeds it.
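The scaling of equation 3.1 and the attention gate can be written out as a short Python sketch. The threshold of 60 is a hypothetical choice (the top of the "normal" 40-60 range); the report does not state the value actually used, and the function names are illustrative only.

```python
def map_range(x, in_min, in_max, out_min, out_max):
    """Linear rescaling of x from [in_min, in_max] to [out_min, out_max] (eq. 3.1)."""
    return (x - in_min) * (out_max - out_min) / (in_max - in_min) + out_min


ATTENTION_THRESHOLD = 60  # hypothetical value, not taken from the report


def control_magnitude(attention, threshold=ATTENTION_THRESHOLD):
    """Return an 8-bit magnitude, or 0 when attention does not exceed the threshold."""
    if attention <= threshold:
        return 0
    clamped = max(0, min(100, attention))   # keep the input on the 0-100 scale
    return round(map_range(clamped, 0, 100, 0, 255))
```

For example, an attention level of 80 maps to 80 × 255 / 100 = 204, while a level of 50 stays below the gate and yields no output.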

3.3 Neurofeedback through the LabVIEW Front Panel

Neurofeedback, a form of biofeedback, is a treatment in which EEG equipment is used to present real-time records of brain activity. This enables you to train yourself, for instance to become more concentrated or relaxed, by altering your brainwave patterns over time. Feedback about your concentration level can be given as a graph, for instance, as illustrated in fig 3.6.

Fig3.6 NeuroFeedback using a blue bar graph

The subject's task is usually to keep the bar above a certain threshold, indicated here by the horizontal black line. Typically one training session like this lasts about 45-60 minutes and needs to be repeated to get the desired effect, anywhere from 25 to 50 times depending on the patient (Heinrich, Gevensleven, & Strehl, 2007).

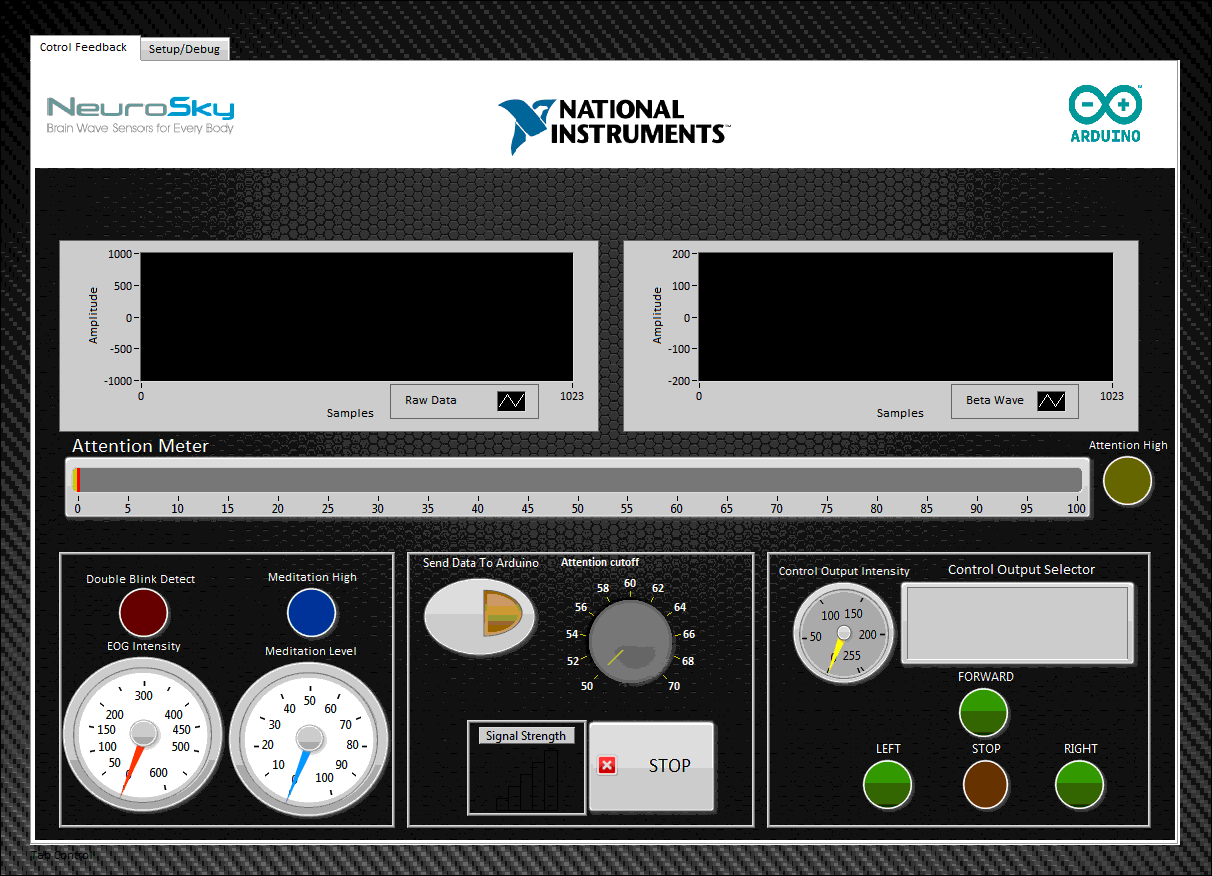

The LabVIEW FP provides an excellent way to implement neurofeedback. Along with the attention and meditation levels, the EOG reading, the raw EEG reading and the control output selection can be displayed in a user-friendly way. Fig 3.7 shows the final LabVIEW FP.

Fig3.7 NeuroFeedback using a LabVIEW FrontPanel

4. Control Output Generation

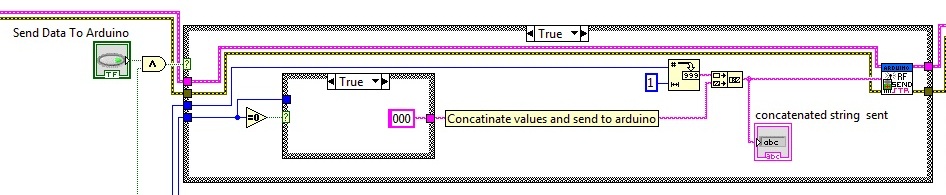

The multiple blink information and the attention magnitude data are used for the control output. The system is set up to control motion in a 2-D plane, where double blinks cycle between forward, left and right. A triple blink stops the output. An integer is assigned to each direction. The magnitude of motion in the currently selected direction is decided by the attention level.
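The direction selection described here behaves like a small state machine. The Python sketch below is one possible reading of it: it assumes the selection starts at "forward", that a double blink both advances the selection and re-enables the output, and that a triple blink only disables the output. These details, and the class name, are assumptions rather than documented behaviour of the VI.

```python
DIRECTIONS = ("forward", "left", "right")


class DirectionSelector:
    """Tracks the selected direction and whether output is enabled."""

    def __init__(self):
        self.index = 0          # assumed starting direction: forward
        self.stopped = False

    def on_blinks(self, count):
        """Update the state from a detected group of `count` blinks."""
        if count == 2:          # double blink: cycle to the next direction
            self.index = (self.index + 1) % len(DIRECTIONS)
            self.stopped = False
        elif count == 3:        # triple blink: stop the output
            self.stopped = True
        # any other count (for example a single blink) is ignored

    def current(self):
        return DIRECTIONS[self.index]


selector = DirectionSelector()
selector.on_blinks(2)           # forward -> left
selector.on_blinks(2)           # left -> right
selector.on_blinks(3)           # output stopped, selection unchanged
```

The attention-derived magnitude would then be applied to `selector.current()` only while `selector.stopped` is false.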

The direction and magnitude information is concatenated into a string, with the first character giving the direction and the three following characters giving the magnitude of movement in 8-bit resolution. This string is sent serially to an Arduino microcontroller board. The code fragment can be seen in fig 4.1.

Fig4.1 Send concatenated string to Arduino

The Arduino that receives the string serially from the VI is connected to an RF transmitter, which transmits the concatenated string to the robot to be controlled. The code running on the robot separates the two parts of the string and uses switch-case statements to drive the robot in a differential drive manner, allowing easy wireless control.
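To make the four-character command format concrete, here is a minimal Python sketch of building and splitting such a string, plus one possible differential drive mapping. The direction codes (0 to 3) and the wheel mapping are hypothetical; the report only says that an integer is assigned to each direction and that the robot uses switch-case statements.

```python
STOP, FORWARD, LEFT, RIGHT = 0, 1, 2, 3   # hypothetical direction codes


def encode_command(direction, magnitude):
    """Build the 4-character string: 1 direction digit + 3 magnitude digits."""
    if not 0 <= direction <= 9:
        raise ValueError("direction must be a single digit")
    if not 0 <= magnitude <= 255:
        raise ValueError("magnitude must fit in 8 bits")
    return f"{direction}{magnitude:03d}"


def decode_command(text):
    """Split a received string back into (direction, magnitude)."""
    if len(text) != 4 or not text.isdigit():
        raise ValueError("expected exactly four digits")
    direction, magnitude = int(text[0]), int(text[1:])
    if magnitude > 255:
        raise ValueError("magnitude must fit in 8 bits")
    return direction, magnitude


def wheel_speeds(direction, magnitude):
    """Assumed differential drive mapping: (left wheel, right wheel)."""
    if direction == FORWARD:
        return magnitude, magnitude
    if direction == LEFT:
        return 0, magnitude      # only the right wheel turns
    if direction == RIGHT:
        return magnitude, 0      # only the left wheel turns
    return 0, 0                  # STOP or unknown code
```

Zero-padding the magnitude to three digits keeps every command the same length, so the receiver can split it by position without a delimiter.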

ACKNOWLEDGMENT

We would like to thank our friends and family for helping us fund this project and supporting us along the way. We would also like to thank Dr. Praveen Kumar of Tranquil Minds Psychiatric clinic of SIMHANS (Samhita Institute of Mental Health and Neuro Sciences Pvt. Ltd.) for taking the time to explain the various aspects of a BCI to us and for introducing us to the possibility of this project.

REFERENCES

[1] Heinrich, H., Gevensleven, H., & Strehl, U. (2007). Annotation: Neurofeedback -train your brain to train behaviour. Journal of Child Psychology and Psychiatry,48:1, 3–16.

[2] Larsen, E. A. (2010). Playstation Controlled by Brain Waves (Tech. Rep.). Trondheim.

[3] Mitsar. (2008). BIOFEEDBACK TRAINER MITSAR-BFB. pdf available at http://www.mitsar-medical.com/download/promo/Mitsar-BFB 2008.pdf.

[4] Mitsar. (2010). Mitsar Co. Ltd. Medical equipment for functional diagnostics and neurofeedback. Retrieved from http://www.mitsar-medical.com/.

[5] Neurosky. (n.d.). Neurosky. Available from http://www.neurosky.com/

[6] NeuroSky, I. A. (2010). ThinkGear Socket Protocol (Tech. Rep.). Available from http://www.neurosky.com

[7] Reuderink, B. (2008b). Games and brain-computer interfaces: The state of the art. WP2

[8] Schalk, G., McFarland, D. J., Hinterberger, T., Birbaumer, N., & Wolpaw, J. R. (2004, June). BCI2000: a general-purpose brain-computer interface (BCI) system. (Vol. 51) (No. 6). Available from http://www.ncbi.nlm.nih.gov/pubmed/15188875

[9] SmartBrain. (n.d.). Biofeedback. Retrieved December 2010, from http://www.smartbraintech.com/.

[10] S. Delsanto, F. Lamberti and B. Montrucchio, "Automatic ocular artifact rejection based on independent component analysis and eyeblink detection," IEEE EMBS Conference on Neural Engineering, pp. 309-312, 2003.

[11] I.Daubechies, Ten Lectures on Wavelets, New York: SIAM, 1992.

[12] V. J. Samar, A. Bopardikar, R. Rao, and K. Swartz, "Wavelet analysis of neuroelectric waveforms: a conceptual tutorial," Brain and language, vol. 66, pp. 7- 60, Jan 1999.

[13] V. Krishnaveni, S. Jayaraman, L. Anitha and K. Ramadoss, "Removal of ocular artifacts from EEG using adaptive thresholding of wavelet coefficients," J. Neural Eng., vol. 3, pp. 338-346, Dec. 2006.

[14] Croft RJ, Barry RJ, “Removal of ocular artifact from the EEG: a review,” Clinical Neurophysiology, 30(1), pp. 5-19, 2000.

[15] S. Venkata Ramanan, J. S. Sahambi, N. V. Kalpakam, “A Novel Wavelet Based Technique for Detection and De-Noising of Ocular Artifact in Normal and Epileptic Electroencephalogram,” BICS 2004.

[16] Micheal, Arduino Cookbook, 3rd ed., vol. 2, pp.68-73.

this is super awesome!!! must see the video!!!!

This really is awesome.The Report gives a clear idea of what it can be.Research of this kind has to be encouraged in India.This project I think is aimed at very high level and also at day to day use.God has given me only two hands.,i can't do this much with these two hands....well..here comes the mind controlled bot..It makes you perform things n a better and efficient way.

And this is just the start. There are more advanced brain-computer interfaces in the medical sector, and the answer is to apply them to a wider range of applications rather than sticking to neural rehabilitation or activity mapping. If you search YouTube, there is research done abroad where the sensor is placed right above the motor cortex in the brain. At such close proximity, signals going to different parts of the body can be picked up discretely before they are even sent! It is believed that merely thinking about movement causes the motor cortex to fire neurons.

The possibilities are endless.Equipment exists, all that is needed is more research.

This is intriguing and opens doors to just so many possibilities.

Will it not be a gift to all of those who are not in a position to use the limbs - to use such technology to get over the hurdles !

May this project be an honest beginning and hoping that the inspiration is carried forward to research more and develop more. Good luck !

Thank you for your support. You can help us by spreading the message.

hey it's really master mind ya

Fantastic! I can not see the video, may you send another URL for this video to me? Thanks! anjijiajia@163.com

This is brilliant - You've done a really good job using both LabVIEW and Arduino. I am currently working on my own EEG system and can appreciate that this can't have been a very simple task to manage!

Applications Engineer

National Instruments UK & Ireland

its the starting point to future engineering,,, its really awesome...

hello .... i am also doing a project centered around eeg ...! we are a still confused over the acquisition system that we want to make ... can u help me with that ?

can i get those vi's please i want to try implementing them pls do share those vi's

Hi! I'm trying to build a LabVIEW-Arduino-NeuroSky interconnection, but I have a problem running my VI. If I run a VI with the LabVIEW-Arduino application or the LabVIEW-NeuroSky application, each works well separately. But if I try to run a VI that includes both, only the LabVIEW-NeuroSky application works, and an error message like this appears: VISA Clear in LabVIEW Interface for Arduino.lvlib:Init.vi.

I hope you can help me with my problem. Thanks!

Josue Mtz

Excellent work. Would you be sharing the VI.

Sam

can you tell the cost of the project and the mindwave headband,as i want to use the same for my project.

reply as soon as possible

Could disponibilize the VI for download or send me it? I'm doing a research using the headset and it would help me a lot as an example. My email is fernandoseitim@gmail.com

Grateful!

hey can u please send the vi file for my mail-samjivatsa@gmail.com coz im doing d similar thing in ecg and ur vi would help me a lot.

It's really an interesting project @Vjvarada.

I'll do this as my final year project, can u assist me in sending further details to madhukrishna08@gmail.com . Please do help me with the abstract, components required and the code.

Thank You in advance!

I'm trying to realize un project with lego ev3 and mindwave, can you help me ? praiaamare@gmail.com

Thank you in advance

Hello 😃 , would you plz send me the .Vi file ? i am doing similar thing with EOG and your program would be pretty helpful to me

My email : Mayar.mohamedmedhat@gmail.com

Thanks alot !

Hi I am also working on a similar project - am a university student, would love to see your VI file? Did you use LINX in lab view to interface with arduino? I want to take EOG data from an Open BCI board and turn it into output to an arduino to make a 3dprinted eyeball move up and down on servos... do you have any advice on how to do this simply?

Hello,

I am sorry, I don't have the .vi file. I tried parsing the data using LabVIEW but did not get satisfying results, so I switched to C#, and the results are better, I believe.

what are you willing to do using the neurosky ? i mean do want to get

the eyeblinks or attention , etc .... ??

Kind regard.

sorry i didn't notice you already wrote eyeblink.

Okay well , u can actually get the eyeblink strenght using the C# .You

can use the eyeblink strenght to differentiate between the forced

eyeblink (the one you mean it) and the other ones .....is that what

you need ?

if yes i can tell you how to do that, and then u can easliy link the

C# and LabVIEW or the C# and the Arduino

I have used intentional single blink for stopping robot and continuos blink

for moving in reverse direction.

i'm working on matlab. can u suggest algortihms for left and right motions.

Hi mm93,

Thank you so much for the response! I had wanted to just use the upward eye

movement to move an animatronic eyeball upward and the downward eye

movement to move it downward, but if I can use a forced eye blink to make

the animatronic eye "blink" that would be cool too, whatever you can offer

is great! I don't have C# (is that a programming language or a program I

should download?)

Thanks!

sir, i'm doing my project in with the brainsense headband..i'm trying to connect brainsense with labview..but i didn't connected till now...pls sent this program to my mail vinothmtech10@gmail.com.pls reply as soon as possible.

Hi, I am currently working on a project involving use of the mindwave headset. If possible could you send me your VI to

matt-curran@hotmail.com it would be greatly appreciated.

Thanks in advance.

can u plz send thz Vi file on my email...i need ur help...shall b very thankful to u..thanx in advnce

Sir i am student of B.tech can you please provide me the complete VI so that i can understand this work and try to do it.

It will be a great help

my contact no is +91-7533019094

mail id: acmvarunchib@gmail.com

Has anyone recieved the VI for this project?

please..kindly upload the VIs for us to download. thanks in advance. and a more detailed report if possible

Hi all!

Thank you all so much for your responses. It has been quite some time since I did this project, and I don't remember much.

I have put up all the VIs and subVIs. I hope you will be able to piece it all together. I don't think I've mentioned it, but I have an Arduino connected serially to LabVIEW, communicating over LVIFA, which also has an RF transmitter that controlled the robot wirelessly.

I can not find the attached VIs please can you help me

Thanks for sharing the vis,"send String RF SubVI.vi" is missing in the vi shared, could you share that vi also.

Thanks again.

Hi guys, could you check if it works now? as @satinder said, ive attached said subVI

As well as beta intensity extraction vi. Thanks

Sorry my bad, I've downloaded it. But I have an error when about to run vi which says "call library function node 'thinkgear.dll:TG_getValue": library not found or failed to load. Kindly help me with this. I'm sure I've installed the thinkgear driver. Does anyone have a fix for this error??

Okay, I've found a fix: the ThinkGear driver works only with 32-bit LabVIEW.

Please Hiw did you interface the arduino to the robot so that it could be controlled?? thanks in advanced

Sir, some icons are missing in your labVIEW mastermind project program while opening it on my laptop, could you help us in this regard

I'd like to see the video... can you share? sergioaer@gmail.com